Some of the new Google Lens features that were announced at Google I/O are now available. I’m seeing them on the Pixel 2 XL and OnePlus 6 sitting here on my desk, so the rollout could be quite wide.

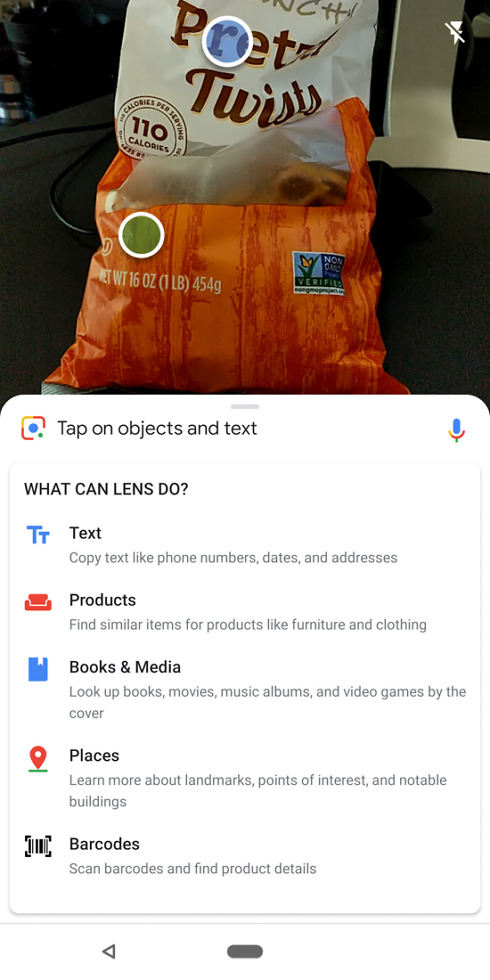

The new features are listed in the screenshots below and include real-time object tracking, which can lead into text selection or product matching. You can point your phone at an object and have Google Lens pull text from it, whether that be a phone number, date, or address. Along with text selection, Google has added a way to find products similar to the ones in front of you (like clothes or furniture) and then shop for them.

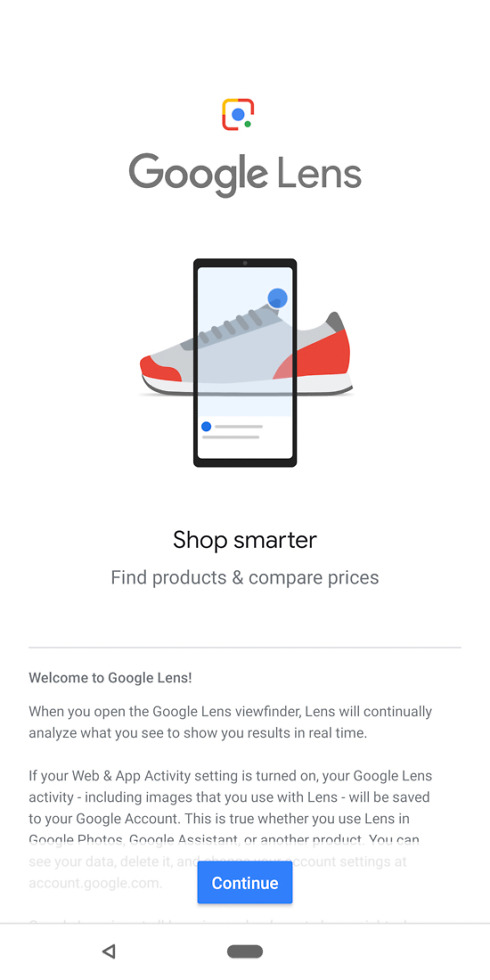

Not only do we have these new features, but Google Lens’ UI has changed a bit too. When you load it up for the first time (open Google Assistant, then tap the Lens button), you’ll be introduced to the new features and see a bubbly white card at the bottom of the screen that expands to show you results.

Again, this all seems to be widely rolling out now. Give it a shot!

// 9to5Google