Apple held its WWDC virtual event today and unwrapped iOS 14, the latest version of its mobile operating system for iPhones. As you probably guessed, it takes a lot of ideas from Android, some of which have been on the platform since the beginning (or close to it) and that Apple seemed hell-bent on never adopting. But times change and smartphone operating systems mature, so Apple continuing to acknowledge Android’s greatness is an inevitable yearly event we look forward to.

Rather than put together one of those tired and played-out “Apple just stole everything from Android” posts, I figured we’d do it a bit differently today. The world needs more positivity, so here’s a different take. After watching the WWDC stream, I can’t help but tip my cap to Apple for looking at Android’s ideas and still finding ways to make them their own, or at the very least, make them slightly better in specific ways.

Home Screen, App Library and what appears to be an app drawer

Apple kicked off iOS 14 talk by finally admitting that its app jukebox approach to home screens is a terrible way to organize a phone that has dozens and dozens of apps. To make the experience of managing apps more reasonable, they created the App Library and also will let users eliminate full pages from their home screen. Yes, this is like an Android app drawer and the ability to delete or add pages to your home screen as you see fit.

This is one of those changes that I’m not sure sounds amazing compared to Android, but it is a massive improvement over the previous iOS experience. Now, you have a couple of pages filled with your favorite apps along with a swipe from the right end of your page setup to get into the App Library. This App Library organizes apps into topics or categories, shows you recently used apps in each, and also lets you pull up an entire alphabetical list by tapping in a top search box.

Apple should probably just let you choose the alphabetical list as a default instead of the smart groupings, but hey, it’s a nice start. And maybe you’ll quickly adapt to where each grouping is located in the App Library, making it easier and quicker than ever to find what you need.

Home screen Widgets look nice

Apple is bringing widgets to home screens in their bid to allow users to actually own the experience on their iPhones for the first time. The widgets are just widgets, so that means you can place them anywhere, app shortcuts will smartly move out of the spots you want to place them, and there will be multiple sizes from some apps.

If you are looking for something unique here, it’s probably a widget Apple has called Smart Stack. Smart Stack is a changing widget that shows you information depending on time of day, your usage history, that sort of thing. It has multiple widgets within it, like weather and news and calendars. You can swipe between those as needed or they’ll smartly switch throughout the day (ex: switching from calendar to fitness recap at the end of a day). If you don’t want Apple’s Smart Stack, you’ll actually be able to make your own widget stack of up to 10 widgets (just drag them onto each other).

Overall, the UI is also much nicer than it is on any Android phone, most of which still use awful grid layouts that are sometimes confusing to navigate.

Picture-in-picture has a feature I need

Apple is also introducing picture-in-picture for video, a concept that has been around for some time. For differences here, I like that Apple lets you pinch-to-zoom on the floating picture box to resize it. Google is only now allowing resizing in Android 11 and it’s certainly not as seamless as pinching on the floating box.

The other cool thing is the ability to slide a picture-in-picture window off to the side of the screen (center above). You’ll still hear audio playing from the clip, which helps when you need the full screen for a minute to take care of a task. To bring the video back, you simply swipe out the little arrow tab for the hidden video.

If you didn’t think the widgets were cool, how can you not be impressed by that?

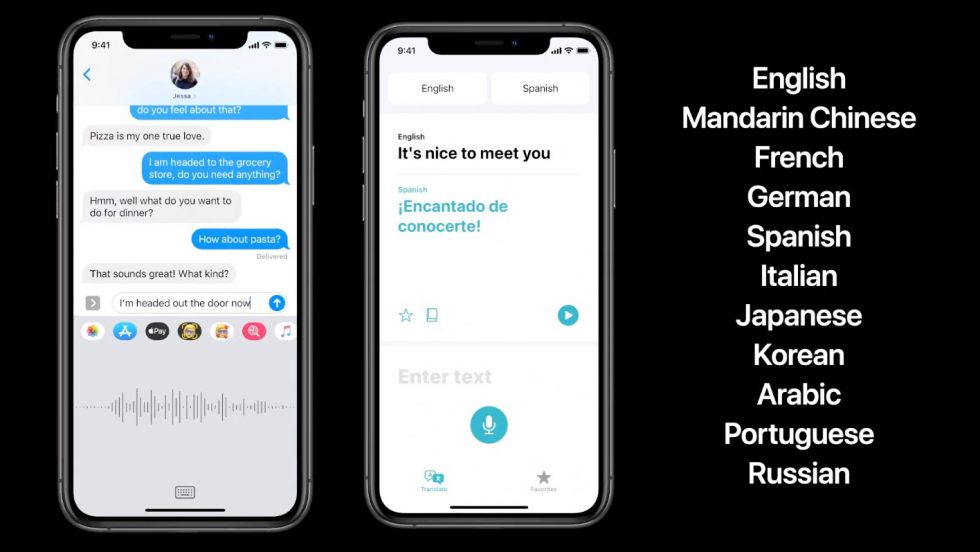

Speech-to-text goes on-device, translated conversations are here

OK, so this stuff isn’t improved over what Google has done, but Apple is now doing it, which is something.

The first thing is that, by “leveraging the power of the neural engine,” Apple is now transcribing speech-to-text on-device to make it faster, more accurate, etc. They are also working on a new Translate app that lets you have conversations with people in different languages, all of which can happen offline. It’s just like the way Google does it.

Mentions, inline replies, improved visuals in Messages

Talking about Apple Messages (or iMessage) is never a fun topic for Android users. The level of pettiness surrounding cross-platform conversations has always been the stupidest shit ever, but Apple is adding a couple of cool new things to Messages in iOS 14.

Two things that I’m not sure I care much about, but might be huge for you, are threaded or inline conversations and @ mentions. I have zero friends, so my group conversations don’t need to be threaded and I don’t have anyone to call out. I can see how you young social folk would love this stuff, though.

What I do think is cool, just from a UI perspective and an attention to detail thing, is the pinning of conversations and also a group preview or image. For pinning, when you pin a conversation in Messages to the top of your conversations list, you’ll see little profile images of people who have recently sent a message in that group (above right), plus the top of the group image shrinks or enlarges images of people depending on how recently they were active (above left).

Maps isn’t Google Maps, but it’s getting there!

I’m not going to sit here and tell you that Apple Maps is anywhere close to being as good as Google Maps, but here are two cool new features coming to iOS 14:

- Apple Maps is getting a cycling directions experience in NYC, LA, San Francisco, Shanghai, and Beijing. Cool?

- Apple Maps is also getting an EV routing experience that adds charging stops along the route. Very cool.

Yay.

App Clips!

OK, this is most definitely cool. “What are App Clips?” you ask? Umm, it’s like a mash-up of Google’s instant apps, NFC, and the simplest way to do app-related stuff without ever installing the entire app experience of an app you might need that one time. Let me try to explain.

App Clips could be used in a situation where you are at a parking meter that needs a specific app in order for you to pay. You would tap (through NFC) a Clips tag that would then temporarily bring up a specific portion (under 10MB) of that parking app to let you pay to park. The app is never fully installed, it uses your secure login info from Apple to log in, and it uses Apple Pay to pay. That Clips version of the app then only hangs around “as long as you need them.”

Clips can also be used as shortcuts sent through a message or the web, to help you get info from businesses that don’t have an app, or even as a shortcut in something like Apple Maps. Apple is creating a visual Clips icon you’ll look for in the real world to let you know that a Clips experience is available, but QR codes and NFC work too.

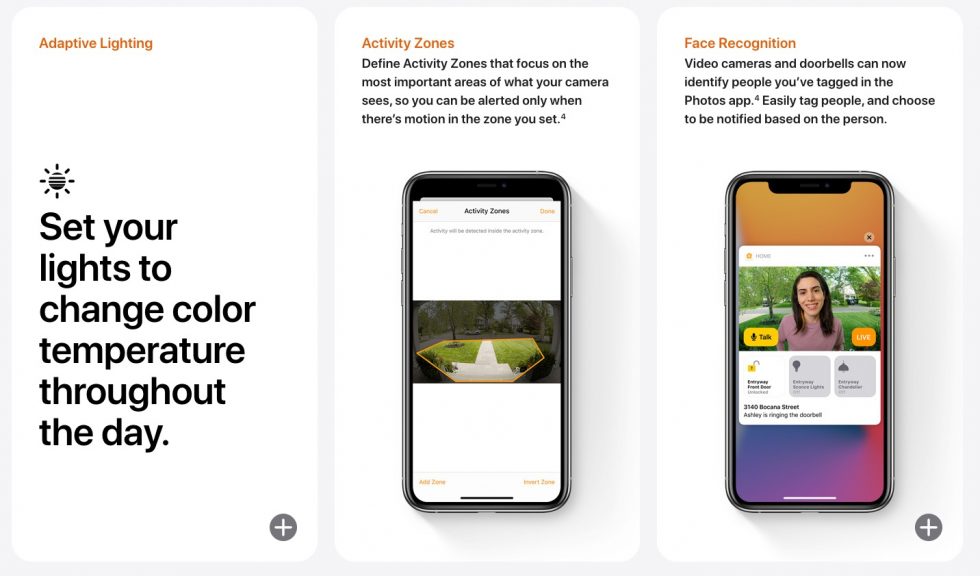

Home stuff

In the home department, Apple is improving their Home app in a number of ways, like suggesting automations for accessories you add, better highlighting accessories that need your attention based on what’s happening (ex: you leaving home), and letting you set supported lights to adapt color temperature throughout the day. Outside of those three features, Apple is doing two other things that I’m confused about because typically these features are paid services as a part of 3rd party smart home products.

The first is an activity zone setting where you can define which areas are important for your home security cameras to watch. The other is a facial recognition thing, where cameras can recognize faces you’ve tagged in Apple Photos and tell you about them. Apple says it’s doing these two things within the Home app, which sure sounds to me like they are getting around subscriptions and pro feature sets from 3rd party services and just giving you those goods. I hope I’m wrong there, because that seems like some lawsuit level stuff.

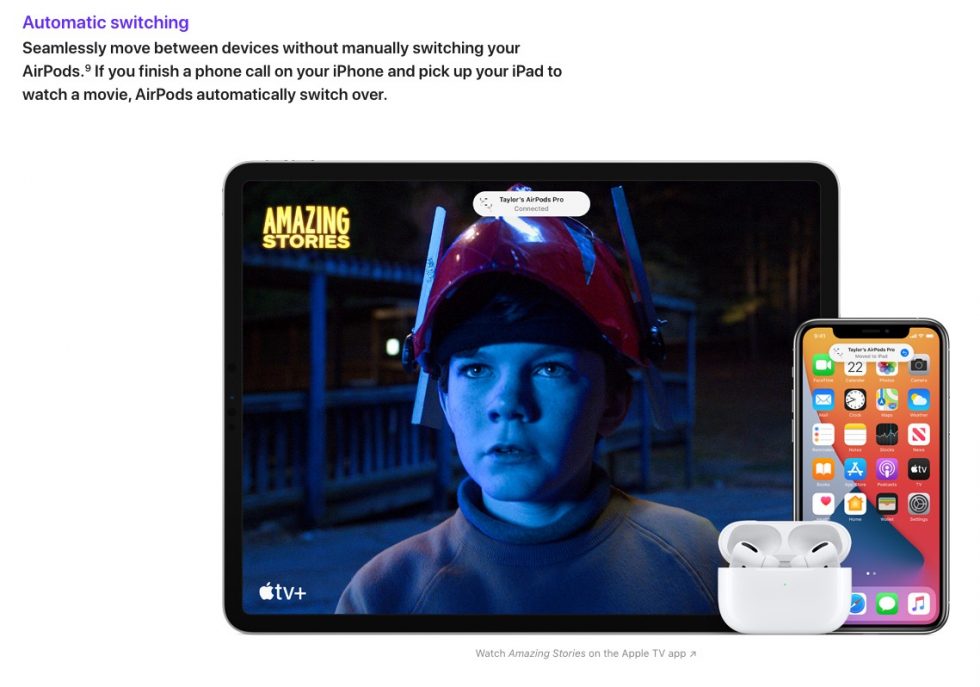

AirPods or auto-audio switching between devices

Imagine if you got a call on your Android phone which was connected to Pixel Buds, took that call, then hung up and your Pixel Buds immediately switched over to your Pixel Slate because you picked it up and started using it. Would that be sweet? iOS 14 combined with an iPhone, iPad, and AirPods does that. You got us on this one, Apple.

And that’s just a look at the big stuff that Apple talked in detail about, so there could be more. Again, the point here wasn’t to show you all of the stuff that Apple had copied. In some ways, Apple (certainly) borrowed ideas from Android and then further improved upon them in ways you have to hope Google will take note of.

Oh, iOS 14 is supported all the way back to the iPhone 6s. Google, can we work on that too?